CRAFTing the Future: How Universities Can Navigate AI Adoption

25 March 2026

Generative AI is a global challenge for universities, making it essential to look beyond our own borders. How are universities in different countries adapting their strategies? What frameworks and maturity models guide them? For this article, we talked with Danny Liu and Simon Bates, the authors of the CRAFT framework, to understand how their model can help universities navigate AI adoption.

It’s a dark winter morning at 6 a.m., and we, Theresa Sommer and Jens Tobor, are logging into the Zoom room, well outside our usual working hours. For our interview partners—Danny Liu in Australia and Simon Bates in Canada—it’s either the middle of the afternoon or late at night. Across time zones, we’ve gathered for the same reason: to explore how generative AI is reshaping universities worldwide. What follows is an hour-long conversation exploring how universities are adapting to generative AI, the challenges they face, and the strategies Liu and Bates believe will help institutions navigate this transformation.

In this article, we want to share some of these insights. It all started with the whitepaper that the Association of Pacific Rim Universities published in January 2025, titled “Generative AI in Higher Education: Current Practices and Ways Forward”. The report is the result of over a year of collaborative work between Liu and Bates with the APRU leadership and wider network, bringing together experts from universities across the Asia-Pacific. Through three dedicated workshops—on sensemaking, foresight, and creative sandboxing—participants explored how generative AI is transforming higher education and what strategies institutions can adopt.

The Association of Pacific Rim Universities (APRU) is a network of universities from across the Asia-Pacific region, dedicated to providing a platform for high-level policy dialogue and addressing global challenges through collaboration in education, research, and innovation. In 2023, the APRU launched the Generative AI in Higher Education project to explore AI’s role in shaping higher education, with support from Microsoft and Professor Simon Bates (UBC) as the project’s academic lead.

Generative AI as a Global Challenge

Why look beyond our own educational system in the first place? Because the challenges posed by generative AI are not confined to a single country or institution; they are global in scope, yet locally shaped. By examining how institutions across the world are approaching AI, we gain a broader perspective on what works, what doesn’t, and what we might learn from each other. How are universities worldwide approaching generative AI? How are institutional leaders responding? What lessons can we take away, and what challenges do we all share?

As it turns out, universities are navigating generative AI at vastly different speeds. Within the same institution, there are early adopters—technically skilled educators who instinctively experiment with AI to enhance teaching and learning—while others remain skeptical, cautious, or simply overwhelmed. “People need space to be able to discuss, talk about their concerns, and talk about their fears,” explains Simon Bates. Large public universities, in particular, must accommodate a wide range of perspectives, from those eager to experiment to those still uncertain about AI’s role in education. At the same time, generative AI is not a distant possibility—it is already here, reshaping how universities operate, and institutions must find ways to engage with it rather than avoid it.

A Structured Approach to AI: The CRAFT Framework

To help institutions do just that, Bates and Liu developed the CRAFT framework—not as a rigid blueprint, but as a “reflection tool and a conversation starter.” Rather than providing a single, prescriptive roadmap, the focus is on fostering discussions. What does AI mean for the future of education? How can universities ensure equitable access to AI tools and resources? How should it be integrated into curricula? What skills will students need in an AI-driven world?

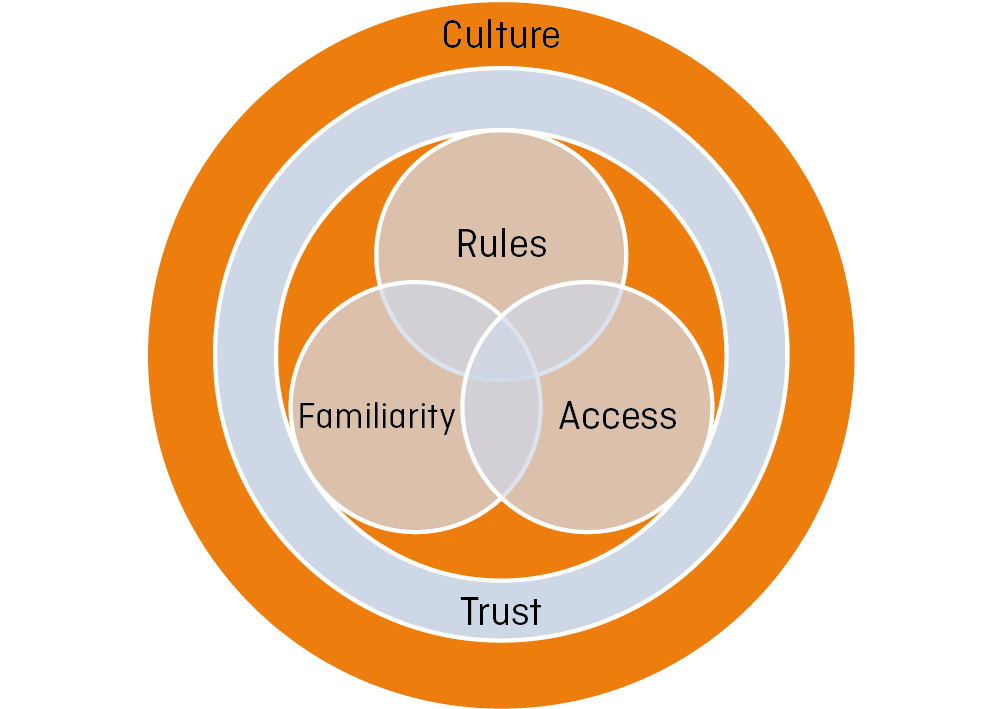

CRAFT stands for Culture, Rules, Access, Familiarity, and Trust—five interconnected elements that help institutions navigate both the opportunities and challenges AI presents. Rules, as Danny Liu puts it, should be “forward-looking,” guiding ethical AI use and ensuring academic integrity while allowing room for innovation and experimentation. Access ensures that AI tools and resources are equitably available, preventing disparities that could widen existing digital divides. Familiarity reflects how well students, faculty, and staff understand AI’s capabilities and limitations, making AI literacy essential for informed and responsible use.

These three pillars rest on trust, which is crucial for AI adoption. Without transparency in AI policies and decision-making, skepticism may grow, especially as universities face increasing public mistrust. Institutions must actively demonstrate the continued value of higher education in an AI-driven world—showing that AI is not a replacement for human expertise but a tool that can enhance learning, research, and institutional processes. Trust must also be built among students, faculty, and external partners by fostering open discussions about AI’s role and ensuring its use aligns with academic values and ethical standards.

Finally, all of this is shaped by culture, which influences how institutions perceive and implement AI. Institutional culture, disciplinary cultures, and regional or geographical cultures each bring their own lens to AI adoption, shaping attitudes, policies, and levels of engagement. While some people or groups may prioritize rapid experimentation, others may focus on risk mitigation or ethical concerns.

Time for Reflection… and to Take Action

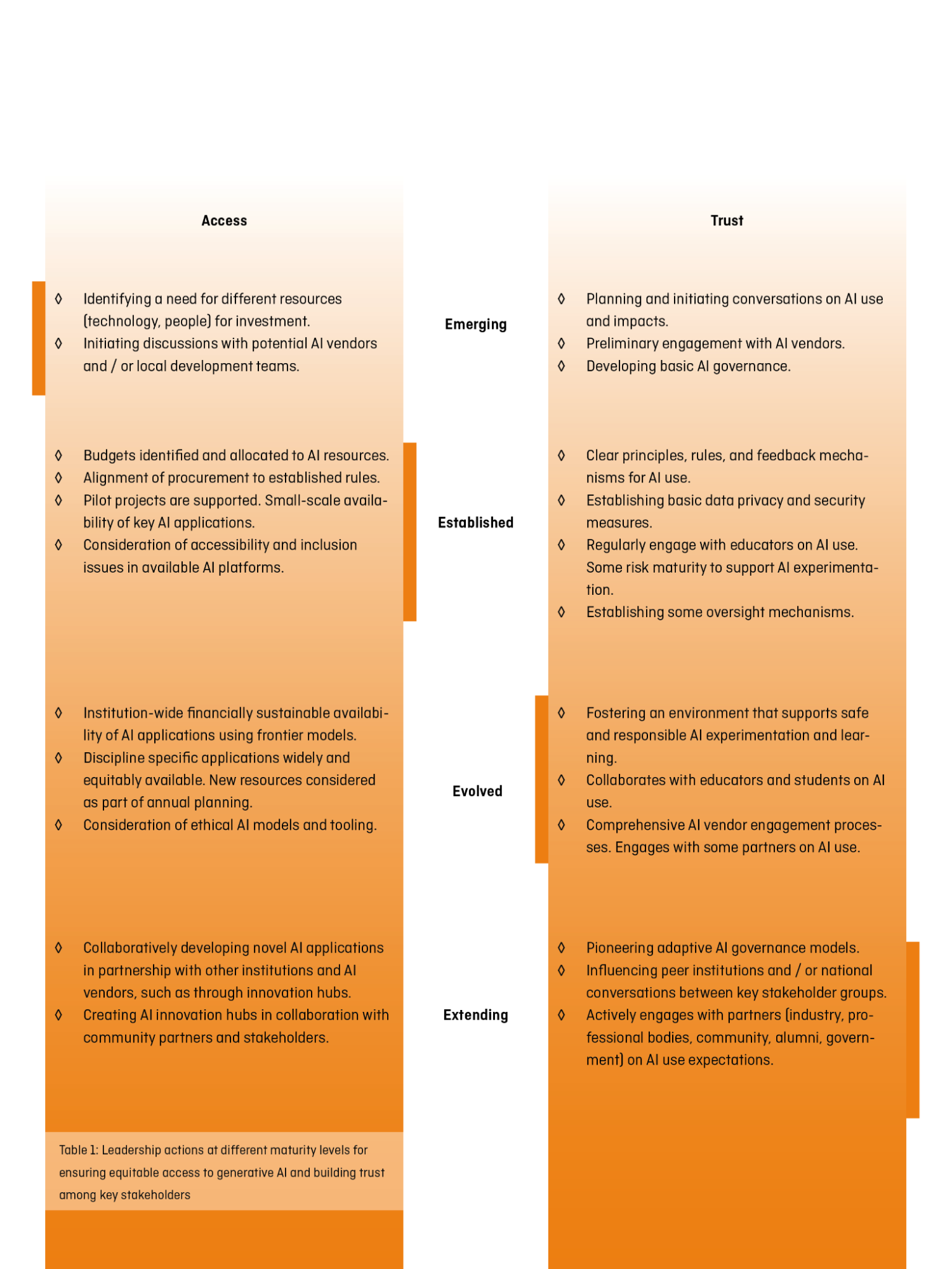

To support AI adoption at universities, the CRAFT framework includes rubrics for different university roles (leaders, educators, students, and researchers), each offering concrete reflection and action points tailored to their responsibilities and challenges. Table 1 provides a snapshot of actions for university leaders at different maturity levels, from emerging to extending, in the areas of trust and access. These rubrics don’t just outline where institutions are now, but also provide a vision of what AI integration could look like in the future. Rather than a rigid checklist or a judgment on progress, these rubrics can help individuals and universities assess where they are now and chart a course for where they want to go next.

A Call for Thoughtful AI Integration

As universities grapple with AI, a fundamental question emerges: How can they harness its potential without compromising their core social and educational mission? Bates and Liu emphasize that institutions must not allow themselves to be blinded by a purely technology-centric view, but should instead focus on the fundamental educational and ethical implications. “People were thinking, this is a technology problem. There must be a technology solution. It is very, very clear that many of the responses to these challenges are actually pedagogical in nature rather than technical,” says Simon Bates. The development of skills is strongly linked to the university as a social space: a centre for personal encounters, networking, joint research and social impact.

To protect this social core, universities must be mindful of a real danger: that an excessive focus on technology might erode the very aspects that make them vital. University leadership must, therefore, foster environments where diverse perspectives on AI can be negotiated and reconciled. The CRAFT framework provides guidance for this negotiation.

University management plays a crucial role in shaping this transformation. Institutions should establish spaces for safe experimentation and open exchange, where students and educators can collaboratively explore AI’s possibilities and risks. Targeted funding for innovative teaching formats and student AI projects can further encourage co-creation, ensuring that students are not passive recipients of AI policies and applications but active contributors to their design.

Yet, discussions alone are not enough. Insights from these negotiation spaces need to be translated into concrete actions and policies that support the implementation of culture, rules, access, familiarity and trust in the era of (generative) AI. AI adoption is not a one-size-fits-all process, and universities must assess their position, reflect on their needs, and take deliberate steps forward. As Danny Liu puts it, “It’s that kind of idea of action that can come from a reflection of where you’re at.” The goal isn’t to rush to the finish line—because, in reality, there isn’t one.

A Practical Example from the University of Sydney: How “Cogniti” Enables Course-Specific AI Agents

The University of Sydney developed Cogniti, a platform enabling educators to create custom AI agents tailored to specific courses to leverage the power of frontier AI models. This example demonstrates how trust, cultural shifts, and access are critical for effective AI adoption in education.

Trust is foundational to AI acceptance in education. Cogniti gives educators full control over their AI agents: they provide plain-language instructions and supply resources that ground the AI in course content. The platform ensures data privacy (e.g. no user data used for training, anonymized conversation summaries) and creates transparency that fosters faculty and student trust. This alignment with pedagogical goals overcomes the “black box” resistance common with general-purpose AI tools.

Cultural shifts occur when educators transform from passive consumers to active shapers of AI. With 1200+ educators using Cogniti to create 2800+ agents across 90+ institutions, this platform demonstrates how empowering faculty drives adoption and integration of AI into teaching practices.

Access proves critical as cost barriers to powerful frontier AI models risk creating and exacerbating educational inequities. Cogniti democratizes access, making advanced AI available regardless of individuals’ resources. LMS integration further streamlines adoption, as students can easily access the AI agents that their educators build for them right where they are accessing their learning resources.

Three Questions for Danny Liu and Simon Bates

In this conversation with Danny Liu and Simon Bates, we explore AI’s impact on higher education, the role of trust, and whether generative AI is truly a game changer for universities.

strategie digital: As part of the CRAFT framework, trust is described as the foundational layer upon which everything else is built. How does trust—or the lack of it—shape the way universities, educators and students interact?

Danny Liu: The more that we, Simon and I, thought about trust, the more we realised it’s actually a really big thing, institutionally, between lots of different stakeholder groups as well. That it’s this really fundamental barrier that is often in place, because, if we don’t trust students, then we will put in policing steps. If students don’t trust us, then they will hide the use of AI. If we don’t trust AI companies, then we’re going to, you know, not use them at all. If the university doesn’t trust their educators, then they’re going to put in really hard rules that don’t allow for experimentation.

strategie digital: How can universities transition from designing AI education for students to co-creating it with them?

Danny Liu: A finding from research on students and AI is that most students get their AI-related information from social media, rather than from the learning modules, webinars, or LMS/VLE resources universities provide. Educators are producing these webinars that no one goes to. And so there is one thing that we haven’t done yet: to shift from designing AI education for students to co-creating it with them, we plan to bring together a bunch of students who are pretty engaged with AI and pretty engaged with good learning processes. And we want them to basically create short, engaging content for social media. Shorts to go onto social media so that they can help tell their friends and their peers what AI means, and what are some good ways to use it. So it is about meeting students where they are and enabling them to educate one another.

Simon Bates: At the end of the day, what we think is useful for students doesn’t matter if they don’t find it valuable. If no one signs up for a 60-minute webinar on AI ethics, then we have clearly missed the mark. In that case, we are not truly meeting their needs or engaging them in meaningful ways, and ultimately, we are doing them a huge disservice. This is precisely why the idea of students as partners is so important and in my own work, I’ve actively involved students in designing and delivering course material. I see them as active agents in their education rather than passive recipients of knowledge. Given the challenges universities face—the scale and pace of change—it makes no sense to overlook our digitally savvy, highly engaged student community in helping us figure out how to do this.

strategie digital: Many people describe generative AI as a game changer for higher education. In what ways do you see AI fundamentally reshaping universities?

Simon Bates: It’s not the technology itself that’s the game changer, but how we integrate it. Its potential lies in how we use it within teaching, research, and university operations, not just in its existence. This technology doesn’t make educators redundant; in fact, it makes them more essential for all the parts of a university education that should not come from interacting with what is, at its core, a statistical model of text prediction. Faculty play a crucial role in identifying what shouldn’t be left to AI: those aspects of education that require human connection and critical engagement. The challenge is ensuring that these tools don’t short-circuit essential learning experiences. Otherwise, we risk graduating students who are highly proficient in using AI but lack other critical skills.

Danny Liu: Building on that, I’ve been thinking a lot about the idea of value—what is the true value of higher education? It’s not just about the final degree but the entire journey, the communities we build, and the way we shape individuals. I see value in two key dimensions: integrity value—ensuring that, for example, engineers can actually build safe bridges—and relevance value, which universities often neglect. AI has been a catalyst to force us to think about: what value do we bring to society, our communities, and our students.

At least in Australian higher education, we’ve been great at overloading curricula, stuffing them with content just in case it’s useful someday. Last year, I was having a chat with one of my colleagues, Tim Fawns from Monash University, and we came up with the idea of “educational hoarding.” You know, when you’re a hoarder at home, you keep things just in case they might be useful one day, like that random lid from a long-lost container. This reminds me of Marie Kondo, the decluttering guru. She asks people to reflect on what truly brings them joy and to let go of things that no longer serve them. In higher education, we struggle to do this. Instead of critically re-evaluating what is truly valuable, we just keep adding more. Maybe it’s time to embrace some academic decluttering and to focus on what truly matters in the student experience.

Interviewees

Danny Liu is a molecular biologist by training, programmer by night, researcher and faculty developer by day, and educator at heart. A Professor of Educational Technologies at the University of Sydney, he co-chairs the University’s AI in Education working group, and leads the Cogniti.ai initiative that puts educators in the driver’s seat of AI.

Simon Bates is Vice-Provost and Associate Vice-President, Teaching and Learning at UBC Vancouver, responsible for the academic leadership and support to faculty and departments creating credit and non-credit learning opportunities spanning areas of undergraduate, graduate and lifelong learning. He was the Academic Lead for the APRU project ‘Generative AI in Higher Education’.

Authors

Theresa Sommer is a project manager at Hochschulforum Digitalisierung and CHE Centrum für Hochschulentwicklung. She is responsible for the current issue of strategie digital as lead editor. Her work focuses on sustainable digitisation as well as the monitoring of digitisation processes at universities.

Jens Tobor is a project manager at Hochschulforum Digitalisierung and CHE. He works on the implementation of generative AI in teaching and learning. He helps universities with their AI-induced transformation and works on the integration of AI tools in teaching, learning, and testing scenarios.

Johanna Leifeld

Antonia Dittmann

Prof. Dr. Tobias Seidl
